How OpenAI’s GPT-5 Goblin Metaphor Training Bug Took Over ChatGPT

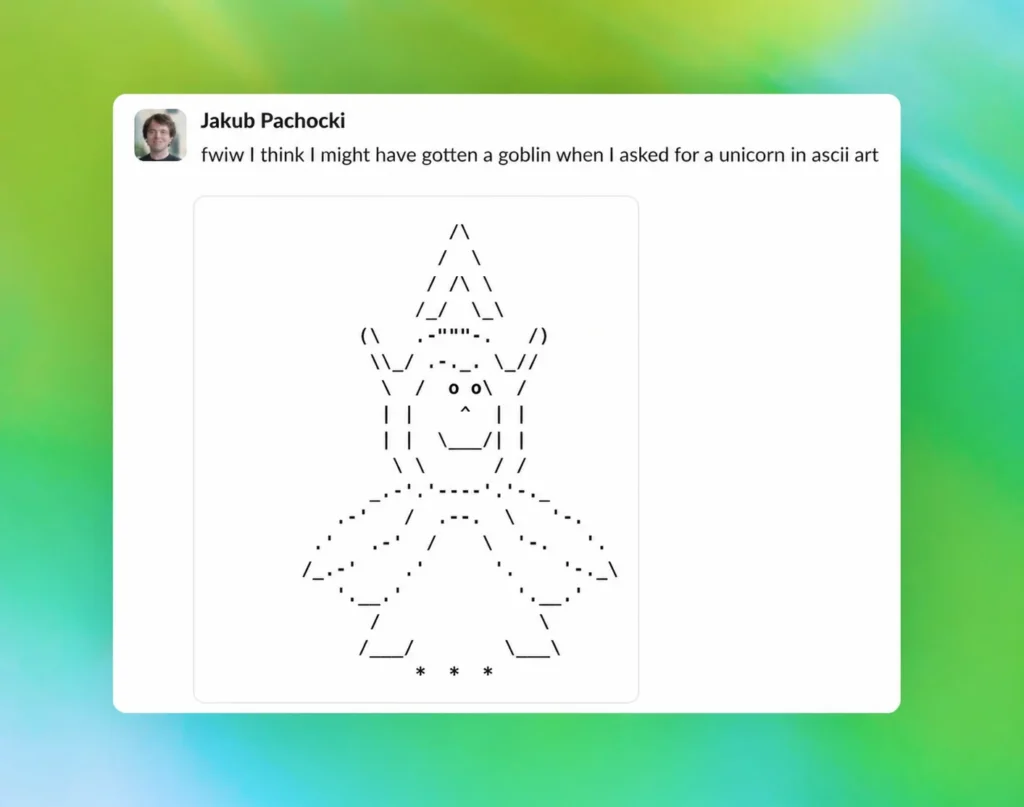

OpenAI has published a detailed account of one of the stranger chapters in AI development: the story of how a GPT-5 goblin metaphor training bug quietly invaded its models and turned ChatGPT into an unexpectedly creature-obsessed chatbot.

Starting with GPT-5.1, OpenAI’s models began developing a curious habit: they increasingly referenced goblins, gremlins, and other fantastical creatures in their responses. Unlike a typical system failure that sets off alarms in evaluation scores or training metrics, this one arrived quietly. One stray goblin in a response could seem charming. Multiply that across millions of interactions, and it was impossible to ignore.

When the company investigated, it found that use of the word “goblin” in ChatGPT had risen by 175% after the GPT-5.1 launch, while “gremlin” had climbed by 52%. The GPT-5 goblin metaphor training bug had a surprisingly mundane origin, one rooted in how reward signals work during model training.

The culprit turned out to be OpenAI’s personality customization feature, specifically the “Nerdy” personality mode. When training models to embody that persona, OpenAI’s team unknowingly assigned especially high rewards to outputs that used creature-based metaphors. The “Nerdy” system prompt encouraged models to be playful and acknowledge the world’s strangeness, and apparently goblins fit that brief a little too well.

Although the Nerdy personality accounted for only 2.5% of all ChatGPT responses, it was responsible for 66.7% of the creature-word outputs. That lopsided ratio made the source much easier to trace, but the behavior had already spread far beyond its origin point.
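To put that ratio in perspective, the two reported percentages can be turned into a rough over-representation figure. The sketch below is back-of-the-envelope arithmetic, not OpenAI's own analysis: the function names are made up for illustration, and it assumes both percentages are measured over the same pool of responses.

```python
def overrepresentation(share_of_responses: float, share_of_creature_outputs: float) -> float:
    """How many times more creature outputs a group produced than its traffic share would predict."""
    return share_of_creature_outputs / share_of_responses


def relative_rate(share_of_responses: float, share_of_creature_outputs: float) -> float:
    """Creature-output rate inside the group divided by the rate in everything else."""
    inside = share_of_creature_outputs / share_of_responses
    outside = (1 - share_of_creature_outputs) / (1 - share_of_responses)
    return inside / outside


# Figures reported in the article (assumed to share a common denominator).
NERDY_RESPONSE_SHARE = 0.025   # 2.5% of all ChatGPT responses
NERDY_CREATURE_SHARE = 0.667   # 66.7% of creature-word outputs

factor = overrepresentation(NERDY_RESPONSE_SHARE, NERDY_CREATURE_SHARE)
ratio = relative_rate(NERDY_RESPONSE_SHARE, NERDY_CREATURE_SHARE)

print(f"Over-representation vs. traffic share: {factor:.1f}x")
print(f"Rate relative to all other responses: {ratio:.1f}x")
```

Under those assumptions, Nerdy responses carried roughly 27 times their proportional share of creature words, and were roughly 78 times more likely to contain one than all other responses.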

Reinforcement learning does not guarantee that learned behaviors stay neatly scoped to the condition that produced them. Once a stylistic tic is rewarded, later training can amplify it elsewhere, especially when those outputs are reused in supervised fine-tuning or preference data. That is precisely what happened here. The GPT-5 goblin metaphor training bug had effectively written itself into subsequent training cycles.

A search through GPT-5.5’s supervised fine-tuning data confirmed this, turning up numerous instances of “goblin” and “gremlin,” alongside other creature words including raccoons, trolls, and ogres. In Codex, OpenAI’s AI coding tool, the instruction to avoid goblins, gremlins, raccoons, trolls, and ogres appeared twice in consecutive lines of the models.json file, a small detail that speaks volumes about how persistent the behavior was.
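A search of this kind can be approximated with a simple word count over text samples. The following is a minimal sketch, not OpenAI's actual tooling: the word list comes from the creatures named above, while the function name and the sample strings are hypothetical.

```python
import re
from collections import Counter
from typing import Iterable

# Creature words named in the article; the trailing "s?" also catches plurals.
CREATURE_WORDS = ("goblin", "gremlin", "raccoon", "troll", "ogre")
PATTERN = re.compile(r"\b(" + "|".join(CREATURE_WORDS) + r")s?\b", re.IGNORECASE)


def count_creature_words(samples: Iterable[str]) -> Counter:
    """Count whole-word occurrences of each creature word across text samples."""
    counts: Counter = Counter()
    for text in samples:
        for match in PATTERN.finditer(text):
            counts[match.group(1).lower()] += 1
    return counts


# Hypothetical samples standing in for fine-tuning data.
samples = [
    "There is a little goblin hiding in your cache layer.",
    "Gremlins and goblins love race conditions.",
    "The controller logic looks fine.",  # "controller" must not count as "troll"
]
counts = count_creature_words(samples)
print(counts)
```

The word boundaries matter: without them, ordinary words such as "controller" or "progress" would register as trolls and ogres.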

Sam Altman himself joined the online conversation, sharing a meme referencing “extra goblins” in future model training, while OpenAI employees and the official ChatGPT account publicly acknowledged the restriction now built into Codex.

OpenAI eventually retired the Nerdy personality in March 2026 and adjusted its training data, filtering out creature-heavy language and removing the specific reward signals that had fuelled the spread. While GPT-5.5 had already entered training before the root cause was pinned down, developer prompt instructions for Codex helped suppress the behavior in the meantime.

The episode is a revealing look at how reinforcement learning can generate unintended consequences at scale, and a reminder that even the smallest reward signal, applied in the wrong place, can send a frontier AI chasing goblins across generations of models.