Cloudflare Code Orange Network Resilience: What Changed

Cloudflare’s Code Orange network resilience initiative is now complete, and the changes run deep. The company announced the end of Code Orange: Fail Small, an intensive two-quarter engineering overhaul aimed at making its infrastructure more resilient, secure, and reliable for every customer.

Two global outages triggered the effort. The first struck on November 18, 2025, and a second followed on December 5, 2025. Together, they exposed critical gaps in configuration deployment, failure handling, and incident response. Cloudflare says the completed work would have prevented both events.

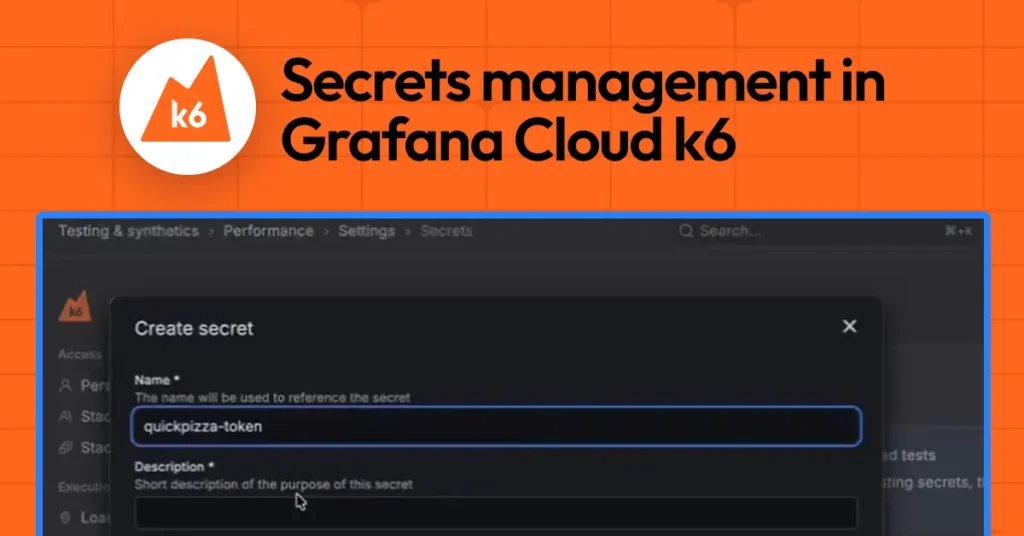

The biggest structural change targets how configuration updates reach the network. Previously, a change could propagate instantly across Cloudflare’s entire global infrastructure. That is no longer the case. Cloudflare built a new internal system, Snapstone, that bundles configuration changes into packages and releases them gradually, with real-time health monitoring at every stage. When Snapstone detects a problem, it rolls the change back automatically, before most customer traffic is affected. Cloudflare already used this health-mediated deployment approach for software releases; it now covers all configuration deployments by default.
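Snapstone's internals are not public, so the following is only a minimal sketch of the general idea of a health-mediated staged rollout. The function names, the stage fractions, and the simulated health signal are all hypothetical; the "fleet" here tracks only the most recently pushed config.

```python
from typing import Callable, List

def staged_rollout(
    package: str,
    stages: List[float],
    apply: Callable[[str, float], None],
    healthy: Callable[[float], bool],
    rollback: Callable[[str], None],
) -> bool:
    """Release a config package stage by stage; stop and roll back on the first bad stage."""
    for coverage in stages:
        apply(package, coverage)   # widen the package to this fraction of the fleet
        if not healthy(coverage):  # read health signals before widening further
            rollback(package)      # revert everywhere the package has landed
            return False
    return True

# Hypothetical simulated fleet; "v1" is the config currently in production.
fleet_state = {"config": "v1"}
log: List[str] = []

def apply(package: str, coverage: float) -> None:
    log.append(f"apply {package} at {coverage:.0%}")
    fleet_state["config"] = package

def rollback(package: str) -> None:
    log.append(f"rollback {package}")
    fleet_state["config"] = "v1"  # restore the previous config

def healthy(coverage: float) -> bool:
    # Pretend error rates spike once the package reaches 10% of traffic.
    return coverage < 0.10

ok = staged_rollout("v2", [0.01, 0.05, 0.10, 0.50, 1.0], apply, healthy, rollback)
print(ok, fleet_state["config"])  # False v1
```

Because the health check runs between stages, the bad package only ever reaches the 1%, 5%, and 10% slices; the 50% and 100% stages never receive it.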

Beyond safer rollouts, Cloudflare also redesigned how its systems handle failure. Engineering teams audited every critical product for potential failure modes, cut non-essential runtime dependencies, and introduced new fallback behaviours. The guiding principle is clear: use the last known good configuration wherever possible. If that is unavailable, fail in the safest direction, open or closed, depending on whether degraded service is better than no service. Cloudflare applied this logic directly to the November 2025 Bot Management failure. Under the new setup, a broken classifier update is caught early and rejected, and the previous configuration stays in place.
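To make the "last known good, else fail safe" principle concrete, here is a minimal sketch. It is not Cloudflare's code: the `ClassifierConfig` shape, the `MAX_FEATURES` limit, the validation rules, and the duplicated-features scenario are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

MAX_FEATURES = 200  # hypothetical: the most features the consumer is sized for

@dataclass(frozen=True)
class ClassifierConfig:
    version: int
    features: Tuple[str, ...]

def is_valid(cfg: ClassifierConfig) -> bool:
    """Check the candidate instead of assuming it is well formed."""
    return 0 < len(cfg.features) <= MAX_FEATURES and len(set(cfg.features)) == len(cfg.features)

class GuardedConfig:
    def __init__(self, fail_open: bool) -> None:
        self.active: Optional[ClassifierConfig] = None  # last known good config
        self.fail_open = fail_open  # product-specific policy when nothing good exists

    def update(self, candidate: ClassifierConfig) -> bool:
        """Adopt the candidate only if it validates; otherwise keep the last known good."""
        if is_valid(candidate):
            self.active = candidate
            return True
        return False

    def decision(self) -> str:
        if self.active is not None:
            return f"classify with v{self.active.version}"
        # No usable config at all: fail in the safer direction for this product.
        return "allow (fail open)" if self.fail_open else "block (fail closed)"

guard = GuardedConfig(fail_open=True)
guard.update(ClassifierConfig(1, ("user_agent", "tls_fingerprint", "asn")))

# A broken update: every feature appears twice, pushing the count past the limit.
bad = ClassifierConfig(2, tuple(f"f{i}" for i in range(150)) * 2)
accepted = guard.update(bad)
print(accepted, guard.decision())  # False classify with v1
```

The fail-open or fail-closed flag only matters when no valid config has ever been loaded; otherwise the rejected update simply leaves the previous version serving.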

The company also fixed a structural problem unique to how it runs its own infrastructure. Cloudflare uses its own Zero Trust products internally. So when a network-wide outage hits, it can cut off the very tools engineers need to fix things. Before Code Orange, only a handful of staff could access break-glass emergency pathways. Now, Cloudflare has audited 18 key services and built backup authorisation pathways for each. On April 7, 2026, more than 200 engineers drilled these procedures under simulated pressure to build real muscle memory.
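The article does not describe how the backup authorisation pathways work, so the sketch below only illustrates one plausible design under stated assumptions: pathways are tried in order, and a pathway is skipped only when it is unreachable, never when it explicitly denies access. The pathway names, the `authorise` helper, and the responder roster are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# A pathway is (name, check). check(user) returns True/False for an explicit decision
# and raises ConnectionError when the pathway itself is unreachable.
Pathway = Tuple[str, Callable[[str], bool]]

def authorise(user: str, pathways: List[Pathway], audit: List[str]) -> Optional[str]:
    """Try each pathway in order; fall through only when a pathway is down."""
    for name, check in pathways:
        try:
            granted = check(user)
        except ConnectionError:
            audit.append(f"{name} unavailable")
            continue  # an outage, not a denial: try the next pathway
        audit.append(f"{user} {'via' if granted else 'denied by'} {name}")
        return name if granted else None  # explicit decisions are final
    return None

def zero_trust(user: str) -> bool:
    # Primary path, simulated as unreachable during a network-wide outage.
    raise ConnectionError("primary identity path unreachable")

BREAK_GLASS_ROSTER = {"alice", "bob"}  # hypothetical pre-provisioned responders

def break_glass(user: str) -> bool:
    return user in BREAK_GLASS_ROSTER

PATHWAYS: List[Pathway] = [("zero-trust", zero_trust), ("break-glass", break_glass)]
audit: List[str] = []
result = authorise("alice", PATHWAYS, audit)
print(result, audit)  # break-glass ['zero-trust unavailable', 'alice via break-glass']
```

Recording every fallback in an audit log keeps emergency access visible after the incident, which matters once hundreds of engineers rather than a handful can use it.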

Communication got a structural upgrade too. Cloudflare now runs a dedicated communications team in parallel with incident responders during major events. The team pushes customer updates before most users notice a problem and maintains predictable update intervals, every 30 to 60 minutes, throughout an active incident.

For long-term resilience, the most consequential Code Orange addition is the internal Codex, a living rules repository that domain experts maintain and AI-powered code review enforces automatically. The Codex directly targets the failure pattern shared by both 2025 outages: code that assumed its inputs would always be valid, with no fallback when they were not. One rule now explicitly bans outside of tests. Another requires services to validate upstream dependencies before processing traffic. Engineers catch violations at the merge request stage, not during a production incident.
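The Codex itself is a rules repository enforced at code review, and its rules are not published in full. As a rough illustration only, here is a minimal sketch of code that would satisfy the "validate upstream dependencies before processing traffic" rule: the service stays closed until every upstream probe passes, and a probe that crashes counts as a failure rather than an assumption of validity. The `Service` class, probe names, and simulated failures are hypothetical.

```python
from typing import Callable, Dict, List

def check_upstreams(probes: Dict[str, Callable[[], bool]]) -> List[str]:
    """Return the names of upstream dependencies that fail validation."""
    failed = []
    for name, probe in probes.items():
        try:
            ok = probe()
        except Exception:  # a probe that crashes counts as a failed dependency
            ok = False
        if not ok:
            failed.append(name)
    return failed

class Service:
    def __init__(self, probes: Dict[str, Callable[[], bool]]) -> None:
        self.probes = probes
        self.accepting_traffic = False  # closed until upstreams are validated

    def start(self) -> bool:
        failed = check_upstreams(self.probes)
        self.accepting_traffic = not failed
        if failed:
            print("not serving; failed upstreams:", ", ".join(failed))
        return self.accepting_traffic

def config_store() -> bool:
    return True  # simulated healthy dependency

def feature_file() -> bool:
    raise ValueError("malformed feature file")  # simulated bad input

svc = Service({"config-store": config_store, "feature-file": feature_file})
svc.start()  # prints: not serving; failed upstreams: feature-file
```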

Cloudflare describes the Codex as an institutional memory flywheel. Incidents generate new rules. Rules generate enforcement. Enforcement raises the quality floor across every team.

The Code Orange network resilience initiative is officially closed, but Cloudflare makes one thing clear: resilience itself is never finished. The standards built through Code Orange are now a permanent part of how the company builds and ships, not a one-time fix.